CanIRun.ai

Hardware compatibility checker for seeing which AI models your machine can realistically run locally

Best for: code workflows and buyers comparing CanIRun.ai against direct alternatives.

Use CanIRun.ai if you need to check whether local hardware can run specific models and to estimate model fit from browser-detected specs inside a code workflow. Skip CanIRun.ai if your main priority is broader all-in-one coverage or a workflow outside code.

About CanIRun.ai

CanIRun.ai helps users figure out which local AI models their hardware can handle by estimating memory and runtime fit directly from the browser. It is useful for developers and hobbyists evaluating local inference before downloading or configuring large models.
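
As a rough illustration of what a memory-fit estimate involves, the sketch below applies a common rule of thumb: weight size is roughly parameter count times bits per weight, divided by eight, plus an allowance for runtime overhead. This is not CanIRun.ai's actual method, which is not described on this page; the `Hardware` record, the function names, and the 1.2 overhead factor are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Hardware:
    """Hypothetical spec record; the field names are illustrative only."""
    name: str
    memory_gb: float  # VRAM or unified memory assumed usable for inference


def estimate_model_memory_gb(params_billions: float,
                             bits_per_weight: float,
                             overhead_factor: float = 1.2) -> float:
    """Rule-of-thumb footprint: weight size times a flat overhead allowance.

    overhead_factor is an assumed fudge factor standing in for the KV cache
    and runtime buffers; real usage varies with context length and runtime.
    """
    # 1e9 parameters * (bits / 8) bytes each, expressed in GB (1e9 bytes).
    weight_gb = params_billions * bits_per_weight / 8
    return weight_gb * overhead_factor


def fits(hw: Hardware, params_billions: float, bits_per_weight: float) -> bool:
    """True if the estimated footprint is within the machine's memory."""
    return estimate_model_memory_gb(params_billions, bits_per_weight) <= hw.memory_gb


if __name__ == "__main__":
    gpu = Hardware(name="example 8 GB GPU", memory_gb=8.0)
    print(fits(gpu, params_billions=7, bits_per_weight=4))   # True
    print(fits(gpu, params_billions=7, bits_per_weight=16))  # False
```

Under these assumptions, a 7B model quantized to 4 bits needs roughly 4 GB and fits on an 8 GB card, while the same model at 16 bits does not. Treat the numbers as a first-pass filter, not a guarantee of usable runtime speed.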

CanIRun.ai Pricing and Value

CanIRun.ai is completely free to use, which makes it easier to test before committing to a larger workflow or team rollout.

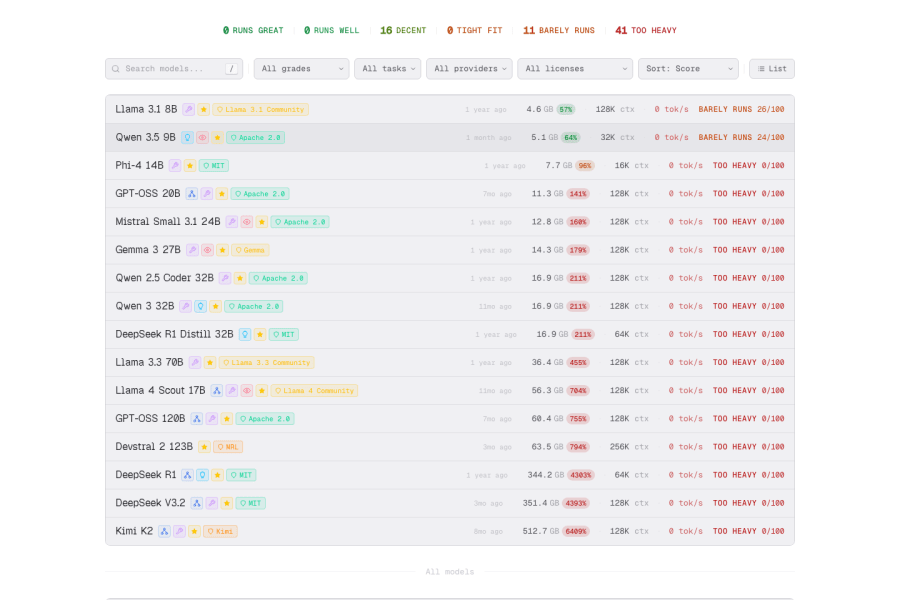

CanIRun.ai Screenshots

Key Features of CanIRun.ai

Best Use Cases for CanIRun.ai

Pros of CanIRun.ai

- Code focus is immediately clear from the feature set.

- Low barrier to entry for trying the product.

- Checking whether local hardware can run specific models gives the product a concrete primary use case.

- Still differentiated enough to stand out in a crowded market.

Cons or Limitations

- Free access does not always mean the best limits, support, or export quality.

- CanIRun.ai may be a weak fit if you need much broader workflows outside code.

- Feature lists alone do not guarantee output quality, so real workflow testing still matters.

- Smaller review volume means buyers may need extra validation before committing.

Who Should Use CanIRun.ai?

- Teams or solo operators who need code output regularly, not just occasionally.

- Users who want low-friction adoption without a budget approval step.

- Anyone whose workflow maps closely to checking whether local hardware can run specific models and estimating model fit from browser-detected specs.

Top Alternatives to CanIRun.ai

If CanIRun.ai is not the right fit, these alternatives are the closest matches in code workflows and are worth comparing side by side.

Frequently Asked Questions about CanIRun.ai

What is CanIRun.ai?

CanIRun.ai is a free code AI tool by CanIRun.ai. It helps users figure out which local AI models their hardware can handle by estimating memory and runtime fit directly from the browser, which is useful for developers and hobbyists evaluating local inference before downloading or configuring large models.

Is CanIRun.ai free?

Yes, CanIRun.ai is completely free to use.

What can you do with CanIRun.ai?

CanIRun.ai is used for code tasks such as checking whether local hardware can run specific models, estimating model fit from browser-detected specs, and planning and comparing local AI setups.

Who made CanIRun.ai?

CanIRun.ai was created by CanIRun.ai and launched in 2026.

What are the best alternatives to CanIRun.ai?

Top alternatives to CanIRun.ai include GitHub Copilot, Cursor, Replit, and Emergent, all available on aitoolcity.