Audio AI Tools

49 tools: voice, music, and audio AI

What are audio AI tools?

Audio AI tools have quietly matured into one of the most practically useful corners of the AI ecosystem. A category that once meant novelty voice effects now covers serious production workflows: generating realistic voiceovers in dozens of languages without a recording studio, cloning a presenter's voice so they can "re-record" lines by typing rather than re-entering the booth, composing full original music tracks from a text description in seconds, removing background noise from a podcast recorded in a kitchen, and editing a 90-minute interview by deleting sentences from a transcript rather than scrubbing through a waveform. These tools serve a wide range of users: solo creators who cannot afford a voice actor, global businesses producing multilingual content at scale, podcast producers trying to reduce post-production time, game developers who need ambient music on a budget, and anyone who has stared at a blank audio timeline wondering where to start.

Audio is harder to produce than text and more expensive to produce than most people expect. A single decent voice recording session, properly edited and mixed, used to take hours and cost significantly more than most content budgets allowed. AI audio tools have collapsed that cost curve — not to zero, but to a fraction of what it was. For businesses producing training content in multiple languages, that means localization at scale without a full studio operation. For creators, it means publishing consistently without being bottlenecked by production. For developers, it means building voice interfaces and audio features without a dedicated audio engineering team.

Common questions about audio AI tools

What are AI audio tools most useful for in real production work?

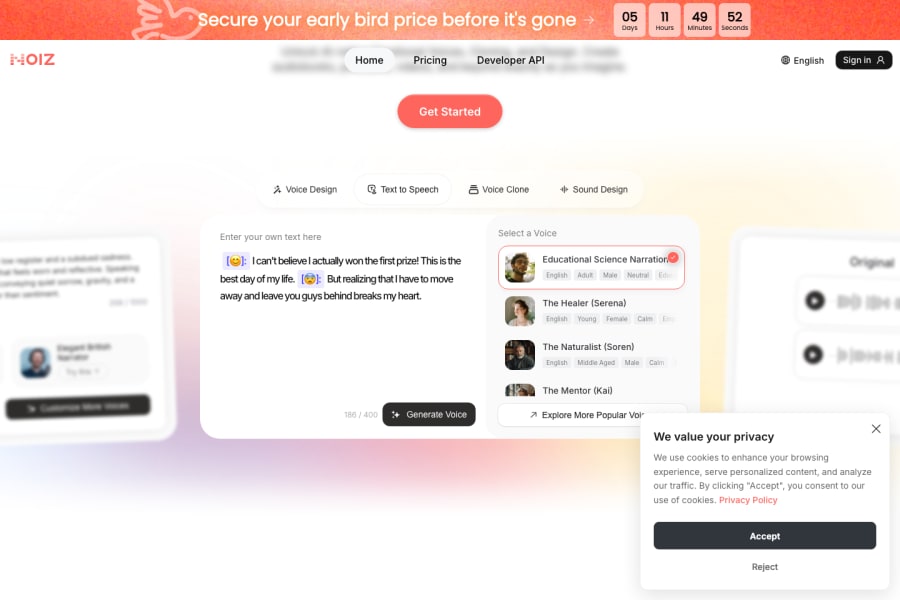

The highest-value use cases are: generating voiceovers for videos, explainers, and training content without booking a voice actor; creating music and sound effects for content, games, and apps; editing podcasts and interviews by working from a transcript rather than a timeline; fixing recorded audio by removing background noise, filler words, and mistakes; dubbing video content into multiple languages with lip-synced voice; and building voice interfaces into products and applications. Most professional users find one category where the time savings are significant and start there.

How realistic is AI voice cloning in 2026?

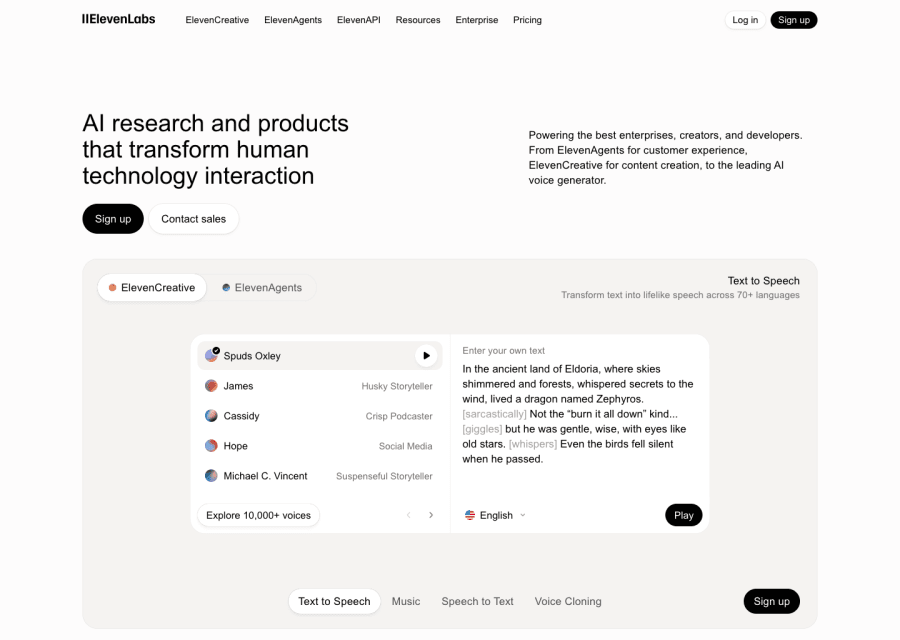

Significantly more realistic than most people expect. Tools like ElevenLabs, PlayHT, and Resemble AI can produce voice clones that are genuinely difficult to distinguish from the original speaker in normal listening conditions, particularly for narration and neutral speech. Emotional range, spontaneous conversational speech, and edge cases involving accent or unusual vocabulary are still weaker points. For most production use — explainers, training content, narration, corporate video — the quality is more than adequate.

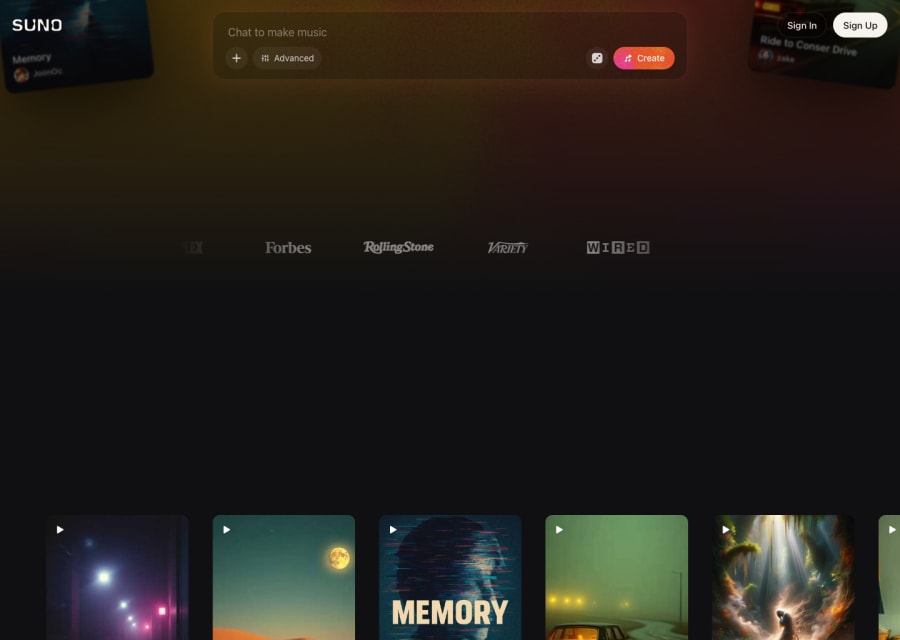

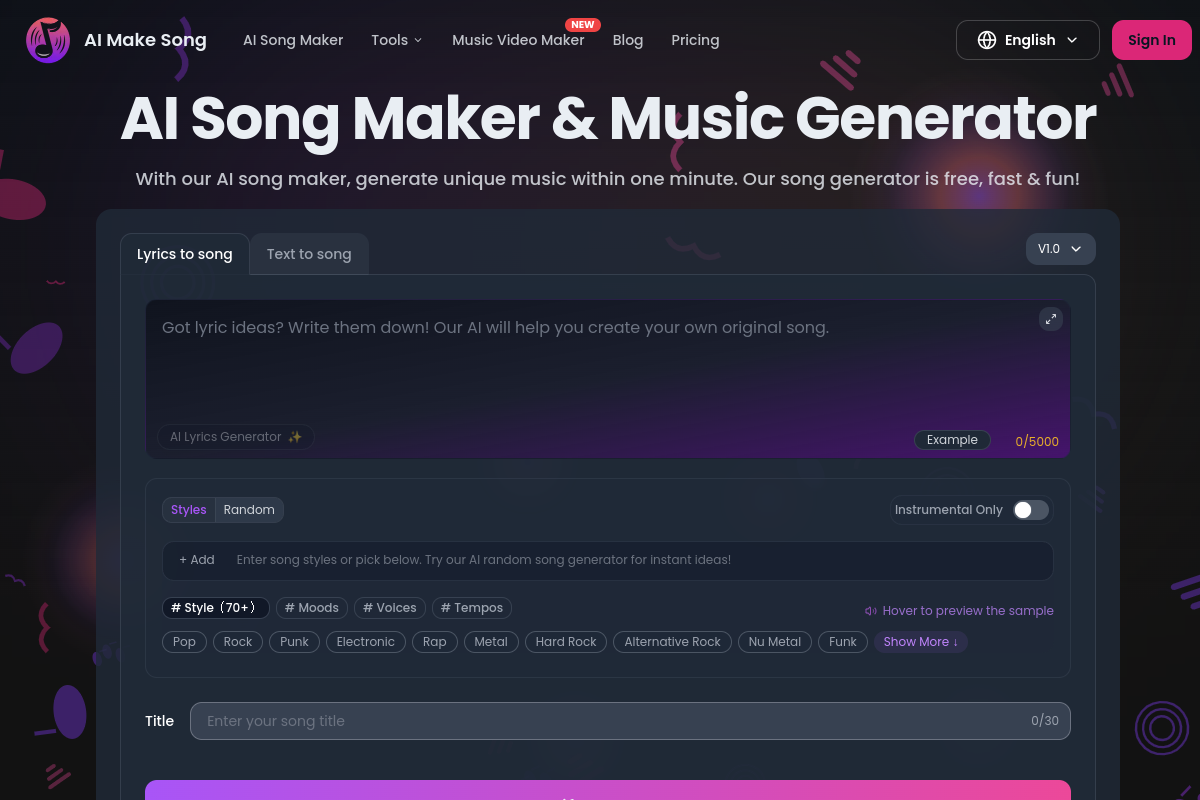

Can AI music tools replace composers?

For certain use cases, they already have: background music for YouTube videos, ambient tracks for apps, loop-based audio for games, and quick jingles for social content. For original composition, cinematic scoring, or music that needs to carry genuine emotional weight, the tools are useful for ideation but not yet for finished delivery. The honest answer is that AI music tools are excellent for users who need functional audio quickly and do not have music production skills — they are less useful for professional musicians who already know what they want.

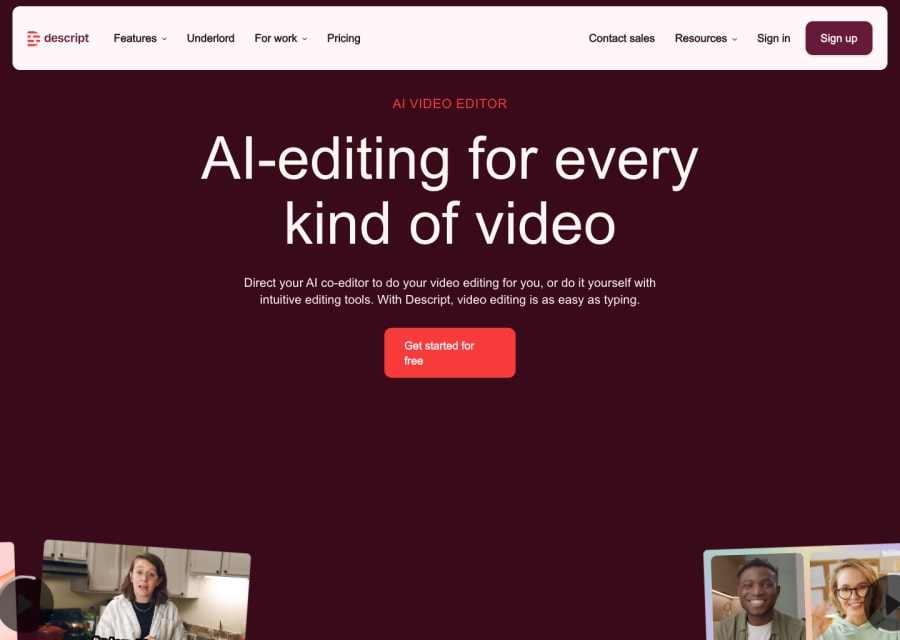

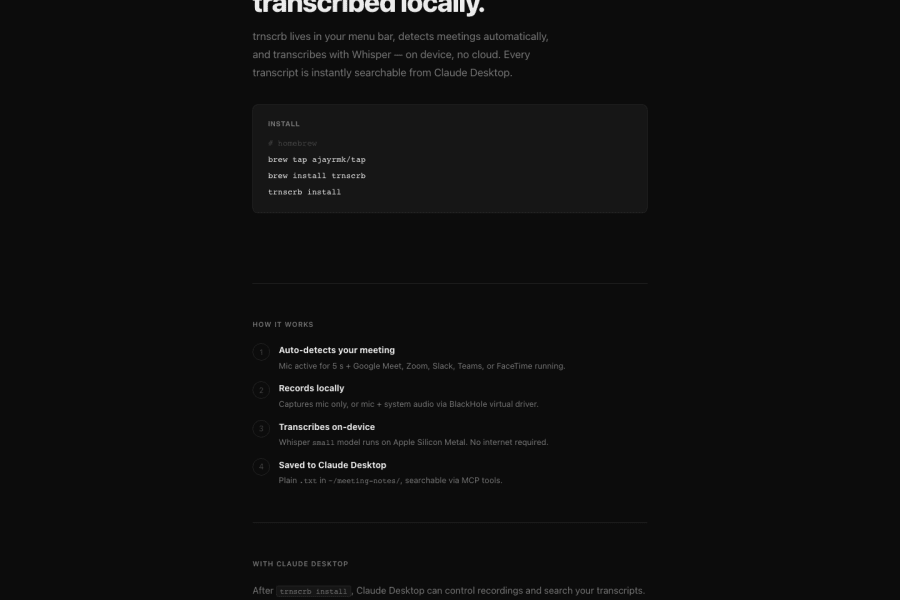

What is transcript-based audio editing and why does it matter?

Tools like Descript let you edit audio and video by editing the text transcript: delete a word from the transcript and it is removed from the recording; rearrange sentences and the audio rearranges with them. For anyone who has spent hours trimming an interview or cutting filler words by hand on a timeline, this is a substantial workflow improvement. It makes editing accessible to non-technical creators and significantly faster even for professionals. Transcript-based editing is arguably the most practically transformative AI audio feature for content creators.
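The idea can be illustrated with a minimal sketch. This is not how Descript or any specific product is implemented; it only assumes that a speech-to-text step has produced a start and end time for every word. The names `Word`, `keep_segments`, and `edited_text` are hypothetical, and the timestamps are invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Word:
    """One transcribed word and its position in the recording, in seconds."""
    text: str
    start: float
    end: float


def keep_segments(words, deleted):
    """Return the (start, end) time ranges that survive a transcript edit.

    `deleted` is a set of indices into `words`. Consecutive kept words are
    merged into one range, so the natural pauses between them are preserved.
    """
    segments = []
    previous_kept = False
    for index, word in enumerate(words):
        if index in deleted:
            previous_kept = False
            continue
        if previous_kept:
            # Extend the current range to cover this word as well.
            start, _ = segments[-1]
            segments[-1] = (start, word.end)
        else:
            # A deletion (or the start of the file) opens a new range.
            segments.append((word.start, word.end))
        previous_kept = True
    return segments


def edited_text(words, deleted):
    """Return the transcript text with the deleted words removed."""
    return " ".join(w.text for i, w in enumerate(words) if i not in deleted)


# Hypothetical transcript of "So um welcome back" (timestamps are invented).
transcript = [
    Word("So", 0.0, 0.3),
    Word("um", 0.4, 0.7),
    Word("welcome", 0.8, 1.3),
    Word("back", 1.35, 1.7),
]

print(edited_text(transcript, {1}))    # So welcome back
print(keep_segments(transcript, {1}))  # [(0.0, 0.3), (0.8, 1.7)]
```

An editor built on this idea would then cut the recording to the returned ranges and join them, typically with short crossfades so the cuts are not audible. That step is left out here because it depends on the audio library in use.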

Are there ethical concerns with AI voice tools I should know about?

Yes, and they are worth thinking through before you use these tools. Voice cloning technology can be misused to create audio that sounds like people saying things they never said. Reputable platforms generally require consent from the voice owner and restrict certain use cases in their terms. If you are cloning your own voice for legitimate production use, the tools work well and the ethical situation is clear. Cloning someone else's voice without consent is a different matter entirely, both legally and ethically. Use these tools responsibly.